ZFS dedup performance optimization

Olivia Zamora

I'm running a VDI server. Many of the VDI users install the same apps on their desktops, so `zfs set dedup=on vdipool/myDesktop` comes in handy.

How can I minimize the performance impact of ZFS dedup?

How much of a performance hit can I expect?

Is there a way to assign a dedicated CPU thread to the dedup process, so that the rest of the system is only minimally impacted?

3 Answers

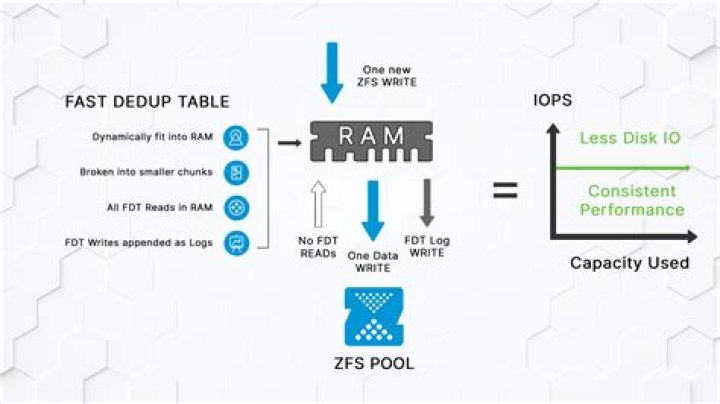

On 100% SSD storage, the performance impact might be bearable if you have enough RAM for 100% of your deduplication hash table to be in RAM all the time. Making sure you have enough RAM for all of that metadata is the only optimisation that can be made.
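To get a feel for "enough RAM", here is a minimal back-of-the-envelope sketch. It assumes the commonly quoted rule of thumb of roughly 320 bytes of RAM per unique block in the dedup table; that figure, the function name, and the block sizes are assumptions for illustration, not measurements from your pool. Running `zdb -S vdipool` simulates dedup on existing data and reports the actual number of unique blocks, which is a better input than a guess.

```python
# Rough estimate of the RAM needed to keep the ZFS dedup table (DDT) in memory.
# ASSUMPTION: ~320 bytes of RAM per unique block (a widely quoted rule of thumb,
# not a value measured on any particular pool).
BYTES_PER_DDT_ENTRY = 320


def estimate_ddt_ram_bytes(unique_data_bytes: int, avg_block_bytes: int = 128 * 1024) -> int:
    """Return an approximate DDT size in bytes for the given amount of unique data."""
    if unique_data_bytes < 0 or avg_block_bytes <= 0:
        raise ValueError("sizes must be positive")
    # One DDT entry per unique block; round the block count up.
    unique_blocks = -(-unique_data_bytes // avg_block_bytes)
    return unique_blocks * BYTES_PER_DDT_ENTRY


if __name__ == "__main__":
    TIB = 1024 ** 4
    GIB = 1024 ** 3
    # Hypothetical block sizes: 128 KiB (large records) down to 8 KiB (small zvol blocks).
    for block_kib in (128, 64, 8):
        ram = estimate_ddt_ram_bytes(1 * TIB, block_kib * 1024)
        print(f"1 TiB unique data at {block_kib} KiB blocks: ~{ram / GIB:.1f} GiB of DDT")
```

The point of the sketch is that the estimate scales inversely with block size, so a VDI pool built on small-block volumes can need far more RAM per terabyte than a pool holding large files.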

If you are on spinning rust, the extreme on-disk fragmentation is going to make the performance completely unusable.

In general, unless you are actually seeing a deduplication ratio of at least 10:1, it isn't worth the enormous performance impact.
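For a sense of what a given ratio buys you, the arithmetic is simple: at a ratio of r:1, the data occupies 1/r of its logical size. The sketch below only illustrates that relationship; the function names are made up for this example. On a live pool, the current ratio appears in the DEDUP column of `zpool list`.

```python
# Space savings implied by a dedup ratio of r:1.
# Function names are hypothetical helpers for illustration only.


def physical_bytes(logical_bytes: float, dedup_ratio: float) -> float:
    """Bytes actually stored on disk for the given logical size and dedup ratio."""
    if dedup_ratio < 1:
        raise ValueError("dedup ratio cannot be below 1:1")
    return logical_bytes / dedup_ratio


def space_saved_fraction(dedup_ratio: float) -> float:
    """Fraction of the logical size that dedup saves (0.0 means nothing saved)."""
    if dedup_ratio < 1:
        raise ValueError("dedup ratio cannot be below 1:1")
    return 1.0 - 1.0 / dedup_ratio


if __name__ == "__main__":
    for ratio in (1.5, 2, 5, 10):
        print(f"{ratio}:1 saves {space_saved_fraction(ratio):.0%} of logical space")
```

Whether the savings at a lower ratio justify the cost depends on your RAM and storage prices; the threshold above is the answerer's judgment, not something this arithmetic proves.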

With the recent introduction of allocation classes (and "special" vdevs) you might have good enough performance with deduplication on a non-SSD pool, assuming you have a fast SSD to hold the metadata (which includes the deduplication data).

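Before you add a "special" vdev, do some experiments and get to know the feature. As I understand it, a failure of the "special" vdev takes the whole pool with it, so it should be at least as redundant as the rest of the pool. Furthermore, in many pool layouts (for example, any pool that contains a raidz vdev) you cannot remove a "special" vdev once it has been added.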

If duplicate blocks occurred completely at random, spread more or less evenly across the disk, then yes, fragmentation would be a serious issue on HDD media. But that is not what happens in practice. In real-world scenarios, the overwhelming majority of duplicate blocks belong to duplicate (or very similar) files, so they occur in bunches. Thus, dedup does not cause a serious fragmentation problem.

Besides, the solution to fragmentation is defragmentation, not avoiding this very useful feature.

However, ZFS simply has no defragmentation tool or feature. The only way to defragment a ZFS pool is to rebuild it. On top of that, ZFS has some licensing problems. Still, a lot of work has gone into it, and many people find it useful. Hopefully someone will implement defragmentation one day (maybe even online defrag!); until then, we should appreciate what we have.
