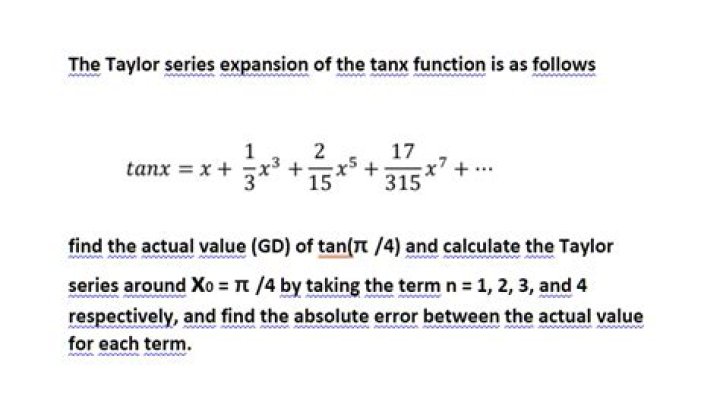

Rigorous proof of the Taylor expansions of $\sin x$ and $\cos x$

Matthew Barrera

We learn trigonometric functions in high school, but their treatment there is not rigorous. Then, in college, we learn that they can be defined by power series. I think there is a gap between the two. I'd like to fill in the gap in the following way.

Consider the upper right quarter of the unit circle $C = \{(x, y) \in \mathbb{R}^2 : x^2 + y^2 = 1,\ 0 \leq x \leq 1,\ y \geq 0\}$. Let $\theta$ be the arc length along $C$ from the point $(0, 1)$ to the point with abscissa $x$, where $0 \leq x \leq 1$. By the arc length formula, $\theta$ can be expressed by a definite integral of a simple algebraic function from 0 to $x$. Clearly $\sin \theta = x$ and $\cos\theta = \sqrt{1 - \sin^2 \theta}$. Then how do we prove that the Taylor expansions of $\sin\theta$ and $\cos\theta$ are the usual ones?

$\endgroup$

$\begingroup$This is what I consider the 'standard proof' for $\sin (x)$, and the proof that I would give tomorrow if suddenly asked for one. (The proof for $\cos(x)$ is more or less identical.)

- Recall Taylor's Theorem. If we want to pay a bit more attention to the basis of the proof, then Taylor's Theorem can be proven from the mean value theorem.

- Recall that the derivative of $\sin (x)$ is $\cos (x)$, and that the derivative of $\cos(x)$ is $-\sin (x)$. These are often done geometrically.

- Note that successive derivatives of $\sin$ look like $\cos(x), -\sin(x), -\cos(x), \sin(x), ...$ cyclically.

- Use Taylor's Theorem to expand $\sin(x)$ around $0$.

- We have to check that the expansion we've obtained actually converges to $\sin(x)$. To do this, we'll use the Lagrange form of the remainder, which is obtained during the standard proofs of Taylor's Theorem. The trick here is that the $k$th remainder term is always bounded in absolute value by $\dfrac{|x|^{k+1}}{(k+1)!}$ because the successive derivatives are always just a $\sin$ or $\cos$ (up to sign), and so are bounded by $1$ in absolute value. For any fixed $x$, this error term goes to $0$ as $k \to \infty$, since the factorial eventually outgrows any power of $|x|$. Thus the expansion does converge to $\sin(x)$.

- Repeat for $\cos(x)$ if desired.
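The remainder bound in the steps above can be checked numerically. The following is only a sanity check, not part of the proof: it compares the error of the Taylor polynomial with the bound $|x|^{k+1}/(k+1)!$, using the library `math.sin` as the reference value. The function names `sin_taylor` and `lagrange_bound` are made up for this illustration.

```python
import math

def sin_taylor(x: float, k: int) -> float:
    """Taylor polynomial of sin about 0, keeping all terms of degree <= k."""
    total = 0.0
    n = 0
    while 2 * n + 1 <= k:
        total += (-1) ** n * x ** (2 * n + 1) / math.factorial(2 * n + 1)
        n += 1
    return total

def lagrange_bound(x: float, k: int) -> float:
    """Bound |x|^(k+1) / (k+1)! on the remainder after the degree-k polynomial."""
    return abs(x) ** (k + 1) / math.factorial(k + 1)

# Print the actual error next to the bound for a few sample points.
for x in (0.5, 2.0, 10.0):
    for k in (5, 15, 45):
        error = abs(math.sin(x) - sin_taylor(x, k))
        print(f"x={x:5.1f} k={k:2d} error={error:.3e} bound={lagrange_bound(x, k):.3e}")
```

For large $|x|$ the bound only becomes small once $k$ is well past $|x|$, which matches the remark that the error goes to $0$ for each fixed $x$ rather than uniformly in $x$.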

Since $x^2 + y^2 = 1$ and $y \geq 0$, we have $y = \sqrt{1 - x^2}$, so

$y' = \frac{-x}{\sqrt{1 - x^2}}$

By the arc length formula,

$\theta = \int_{0}^{x} \sqrt{1 + y'^2} dx = \int_{0}^{x} \frac{1}{\sqrt{1 - x^2}} dx$

We consider this integral on the interval $[-1, 1]$ instead of $[0, 1]$. Then $\theta$ is a strictly increasing function of $x$ on $[-1, 1]$, since the integrand is positive. Hence $\theta$ has an inverse function defined on $[-\frac{\pi}{2}, \frac{\pi}{2}]$, where $\frac{\pi}{2}$ is the value of $\theta$ at $x = 1$ (a quarter of the circumference of the unit circle). We denote this inverse function also by $\sin\theta$. We redefine $\cos\theta = \sqrt{1 - \sin^2 \theta}$ on $[-\frac{\pi}{2}, \frac{\pi}{2}]$.
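As an informal check of this construction, the arc length integral can be approximated numerically and compared with the library arcsine. This is a sketch under assumptions: the helper name `arc_length` and the choice of composite Simpson's rule are illustrative, and the endpoints $x = \pm 1$ are avoided because the integrand is unbounded there.

```python
import math

def arc_length(x: float, steps: int = 2000) -> float:
    """Composite Simpson approximation of the integral of 1/sqrt(1 - t^2) from 0 to x.

    Intended for |x| < 1 only; the integrand blows up at t = +-1.
    """
    if steps % 2:
        steps += 1  # Simpson's rule needs an even number of subintervals
    h = x / steps  # negative when x < 0, which gives a negative signed length

    def integrand(t: float) -> float:
        return 1.0 / math.sqrt(1.0 - t * t)

    total = integrand(0.0) + integrand(x)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * integrand(i * h)
    return total * h / 3.0

# theta should increase with x and agree with the library arcsine.
for x in (-0.9, -0.5, 0.0, 0.5, 0.9):
    print(f"x={x:5.2f} theta={arc_length(x):.10f} asin={math.asin(x):.10f}")
```

The printed values increase with $x$, in line with the claim that $\theta$ is strictly increasing and therefore invertible.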

Since $\frac{d\theta}{dx} = \frac{1}{\sqrt{1 - x^2}}$,

$(\sin\theta)' = \frac{dx}{d\theta} = \sqrt{1 - x^2} = \cos\theta$.

On the other hand, $(\cos\theta)' = \frac{d\sqrt{1 - x^2}}{d\theta} = \frac{d\sqrt{1 - x^2}}{dx} \frac{dx}{d\theta} = \frac{-x}{\sqrt{1 - x^2}} \sqrt{1 - x^2} = -x = -\sin\theta$

Hence

$(\sin\theta)'' = (\cos\theta)' = -\sin\theta$

$(\cos\theta)'' = -(\sin\theta)' = -\cos\theta$

Hence, by induction on $n$,

$(\sin\theta)^{(2n)} = (-1)^n\sin\theta$

$(\sin\theta)^{(2n+1)} = (-1)^n\cos\theta$

$(\cos\theta)^{(2n)} = (-1)^n\cos\theta$

$(\cos\theta)^{(2n+1)} = (-1)^{n+1}\sin\theta$

Since $\sin 0 = 0, \cos 0 = 1$,

$(\sin\theta)^{(2n)}(0) = 0$

$(\sin\theta)^{(2n+1)}(0) = (-1)^n$

$(\cos\theta)^{(2n)}(0) = (-1)^n$

$(\cos\theta)^{(2n+1)}(0) = 0$

Note that $|\sin\theta| \le 1$, $|\cos\theta| \le 1$.

Hence, by Taylor's theorem,

$\sin\theta = \sum_{n=0}^{\infty} (-1)^n \frac{\theta^{2n+1}}{(2n+1)!}$

$\cos\theta = \sum_{n=0}^{\infty} (-1)^n \frac{\theta^{2n}}{(2n)!}$
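The appeal to Taylor's theorem can be spelled out with the Lagrange form of the remainder. The following is a sketch of that standard step for $\sin\theta$ on the interval $[-\frac{\pi}{2}, \frac{\pi}{2}]$ where the functions were defined; the symbols $N$ and $\xi$ are introduced here and do not appear in the argument above. The case of $\cos\theta$ is analogous.

```latex
% Taylor's theorem with Lagrange remainder for \sin\theta about 0.
% The degree-(2N+2) coefficient vanishes because (\sin)^{(2N+2)}(0) = 0,
% so the remainder involves the (2N+2)-th derivative at some \xi
% between 0 and \theta:
\[
  \sin\theta
  = \sum_{n=0}^{N} (-1)^n \frac{\theta^{2n+1}}{(2n+1)!}
  + \frac{(\sin)^{(2N+2)}(\xi)}{(2N+2)!}\,\theta^{2N+2}.
\]
% Since (\sin)^{(2N+2)}(\xi) = (-1)^{N+1}\sin\xi and |\sin\xi| \le 1,
\[
  \left| \sin\theta - \sum_{n=0}^{N} (-1)^n \frac{\theta^{2n+1}}{(2n+1)!} \right|
  \le \frac{|\theta|^{2N+2}}{(2N+2)!}
  \longrightarrow 0 \quad (N \to \infty).
\]
```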

QED

Remark:

When you consider the arc length of the lemniscate instead of the circle, you will encounter $\int_{0}^{x} \frac{1}{\sqrt{1 - x^4}} dx$. You may find interesting functions like we did with $\int_{0}^{x} \frac{1}{\sqrt{1 - x^2}} dx$. This was young Gauss's approach and he found elliptic functions.

$\endgroup$ $\begingroup$One way to fill the gap is by using the theory of differential equations. First, the derivatives of $\sin$ and $\cos$ can be established geometrically. For a very rigorous proof, one should start from a very advanced presentation of elementary geometry, define an angle in a very precise way, define a function which measures angles (all this is done in this book), and then go on to establish the addition formulae and the derivatives. For details you can consult any good book on calculus, such as the one by Apostol. Hence it can be verified that $\sin$ satisfies the differential equation $y''+y=0$ with $y(0)=0$, $y'(0)=1$.

Now, by the power series method for solving differential equations, we find that the solution of the above differential equation is the infinite series $\sum_{n=0}^\infty \frac{(-1)^n x^{2n+1}}{(2n+1)!}$. By the existence and uniqueness theorem for differential equations there is only one solution, so $\sin (x) =\sum_{n=0}^\infty \frac{(-1)^n x^{2n+1}}{(2n+1)!}$. Furthermore, since the power series representation of a function is unique, this is also the Taylor series.
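The power series method mentioned here can be illustrated with exact rational arithmetic. Substituting $y = \sum a_n x^n$ into $y'' + y = 0$ and matching the coefficient of $x^n$ gives $(n+2)(n+1)\,a_{n+2} + a_n = 0$, with $a_0 = y(0) = 0$ and $a_1 = y'(0) = 1$. The sketch below (the function name `series_coefficients` is made up for this example) generates the coefficients from that recurrence and compares them with $(-1)^k/(2k+1)!$.

```python
from fractions import Fraction
from math import factorial

def series_coefficients(count: int) -> list[Fraction]:
    """First `count` coefficients of the series solution of y'' + y = 0, y(0)=0, y'(0)=1."""
    coeffs = [Fraction(0), Fraction(1)]  # a_0 = y(0), a_1 = y'(0)
    for n in range(count - 2):
        # Recurrence from matching powers of x: (n+2)(n+1) a_{n+2} = -a_n
        coeffs.append(-coeffs[n] / ((n + 2) * (n + 1)))
    return coeffs[:count]

coeffs = series_coefficients(10)
for k in range(5):
    expected = Fraction((-1) ** k, factorial(2 * k + 1))
    print(f"a_{2 * k + 1} = {coeffs[2 * k + 1]}, expected {expected}")
```

The even-indexed coefficients all vanish because $a_0 = 0$, so only odd powers of $x$ survive, as in the series for $\sin$.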

$\endgroup$ $\begingroup$There is a simplified elementary derivation of the power series that does not use Taylor series. It can be done through the expansion of the multiple-angle formula. See the paper by David Bhatt, “Elementary Derivation of Sine and Cosine Series”, Bulletin of the Marathwada Mathematical Society, 9(2) 2008, 10–12.

$\endgroup$ $\begingroup$I don't feel like davidlowriduda's answer gives a very rigorous proof. Some of this is just guesswork on what might be going on in the mathematical community. It doesn't use real number induction. Also, I feel like Taylor's theorem is too strong a statement. I also feel like it's too strong a statement to assume that $\sin$ and $\cos$ are analytic. You might not understand what I mean by that, but that's okay because you will probably be able to understand the rest of the answer anyway, and explaining it might make the answer too long for you.

Some people don't even know how the real numbers themselves work. Here's one possible simple definition of the real numbers. Start with the integers. Next we can construct all the numbers that can be obtained by starting from an integer and then dividing by 2 as many times as you want. Those are the dyadic rational numbers, which I will denote $\mathbb{D}$. A Dedekind cut of the dyadic rational numbers is a two-element set of subsets of $\mathbb{D}$ that satisfies the following conditions:

- Every element of $\mathbb{D}$ is an element of one of those sets and not the other

- Both of those sets are nonempty

- One of the sets has the property that all its elements are larger than all the elements of the other set

Some Dedekind cuts of $\mathbb{D}$ have the property that the lower part has no maximal element and the upper part has no minimal element. When that's the case, we invent another number to lie between all the elements of the lower part and all the elements of the upper part. Now we're done. We can also define addition, multiplication, and inequality on them in the intuitive way. Technically, the community probably agreed to define the real numbers with 1, 0, +, ×, and ≤ to be any complete ordered field. Although that's not actually how it was defined, a complete ordered field is an ordered sextuplet $(\mathbb{R}, 0, 1, +, \times, \leq)$ where $\mathbb{R}$ is a set, 0 and 1 are elements of it, + and $\times$ are binary operations on it, and $\leq$ is a relation on it, having the same structure as the sextuplet $(\mathbb{R}, 0, 1, +, \times, \leq)$ I already constructed. Defining a notation for each real number is a whole other story. There are other number systems, such as a hyperreal number system, where you can have numbers that are infinitely close to another number but not exactly equal to it.

Suppose you have a subset $S$ of $\mathbb{R}$ satisfying the following properties:

- $0 \in S$

- $\forall x \in S\exists \epsilon \in \mathbb{R} (\epsilon > 0 \land \forall y \in \mathbb{R}((y > 0 \land y < \epsilon) \implies x + y \in S))$

- $\forall x \in \mathbb{R}(\exists \epsilon \in \mathbb{R} (\epsilon > 0 \land \forall y \in \mathbb{R}((y > x - \epsilon \land y < x) \implies y \in S))) \implies x \in S$

Then it can be shown that all nonnegative real numbers are in $S$. I think that's one possible way to define real number induction. Note that in the rational number system or the hyperreal number system, that kind of induction doesn't work. Given any continuous function $f$ from $\mathbb{R}$ to $\mathbb{R}$, the integral $F(x) = \int_{0}^x f(t)\,dt$ was not actually defined to be an antiderivative. However, it can be shown to be an antiderivative. Using real number induction, it can be shown that all antiderivatives of $f$ are that function plus a constant. I think some authors just state $\sin$ and $\cos$ in terms of a circle but they didn't say what a circle was. As described in this answer, there is an actual reason why the distance formula was defined to be $d((x, y), (z, w)) = \sqrt{(z - x)^2 + (w - y)^2}$, and one of the reasons actually was because there is another entirely different method of defining $\sin$ and $\cos$ using the differential equations

- $\cos(0) = 1$

- $\sin(0) = 0$

- $\forall x \in \mathbb{R}\cos'(x) = -\sin(x)$

- $\forall x \in \mathbb{R}\sin'(x) = \cos(x)$

Using real number induction, this uniquely determines $\sin$ and $\cos$. Since it's unique, if I find any two functions and show that they satisfy the same differential equations, that means those functions are $\sin$ and $\cos$. Let $f(x) = \sum_{i = 0}^\infty \frac{(-1)^i x^{2i}}{(2i)!}$ for all real numbers $x$ and let $g(x) = \sum_{i = 0}^\infty \frac{(-1)^i x^{2i + 1}}{(2i + 1)!}$. Then it can be shown that they both have an infinite radius of convergence and that

- $f(0) = 1$

- $g(0) = 0$

- $\forall x \in \mathbb{R}f'(x) = -g(x)$

- $\forall x \in \mathbb{R}g'(x) = f(x)$

Thus $\forall x \in \mathbb{R}f(x) = \cos(x)$ and $g(x) = \sin(x)$.
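The four conditions on $f$ and $g$ can be checked formally on truncated series. This is a sketch, taking $f$ and $g$ to be the alternating series $\sum (-1)^i x^{2i}/(2i)!$ and $\sum (-1)^i x^{2i+1}/(2i+1)!$ (the alternating signs are what make $f' = -g$ hold); the helper names and the truncation length `TERMS` are choices made for this illustration. Termwise differentiation is justified inside the radius of convergence, which is a fact assumed here rather than checked.

```python
from fractions import Fraction
from math import factorial

TERMS = 12  # number of nonzero terms kept in each truncated series

def cos_coeffs(terms: int) -> list[Fraction]:
    """Coefficients of sum_{i<terms} (-1)^i x^(2i)/(2i)!, indexed by the power of x."""
    coeffs = [Fraction(0)] * (2 * terms)
    for i in range(terms):
        coeffs[2 * i] = Fraction((-1) ** i, factorial(2 * i))
    return coeffs

def sin_coeffs(terms: int) -> list[Fraction]:
    """Coefficients of sum_{i<terms} (-1)^i x^(2i+1)/(2i+1)!, indexed by the power of x."""
    coeffs = [Fraction(0)] * (2 * terms)
    for i in range(terms):
        coeffs[2 * i + 1] = Fraction((-1) ** i, factorial(2 * i + 1))
    return coeffs

def derivative(coeffs: list[Fraction]) -> list[Fraction]:
    """Formal termwise derivative: the coefficient of x^(n-1) becomes n * a_n."""
    return [n * c for n, c in enumerate(coeffs)][1:]

f = cos_coeffs(TERMS)
g = sin_coeffs(TERMS)
df = derivative(f)
dg = derivative(g)

print("f(0) =", f[0], " g(0) =", g[0])
print("f' == -g up to truncation:", df == [-c for c in g[:len(df)]])
print("g' == f up to truncation:", dg == f[:len(dg)])
```

Only the coefficients up to the truncation order are compared, since differentiating the truncated $f$ loses its top term relative to $g$.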

If you don't want to use the fact that $\sin$ is the unique antiderivative of $\cos$ that assigns 0 to 0 and $-\cos$ is the unique antiderivative of $\sin$ that assigns $-1$ to 0, because it's so hard to prove, you could instead define $\sin$ and $\cos$ such that $\sin$ is an integral of $\cos$ and $-\cos$ is an integral of $\sin$. If you then define $f$ and $g$ as power series exactly the way I did before, it can be shown that $g$ is an integral of $f$ and $-f$ is an integral of $g$. Thus $f$ satisfies the new definition of $\cos$ and $g$ satisfies the new definition of $\sin$.

I myself have an open mind that $\mathbb{R}$ is a proper class. I also don't believe real number induction is the real reason for the observations we make. I think one possible theory is that the fundamental laws of the universe are a 1-dimensional Conway's game of life that's only finite sized from the start whose grid keeps expanding with time and whose laws are different at the edges and simulates a universe that includes a lot of real numbers some of which are irrational but only countably many real numbers, all the ones that can be gotten by a certain type of complex process. Yet the observations will always be the way we can deduce from the assumption that the universe includes all real numbers and satisfies real number induction even though it doesn't actually include all of them.

$\endgroup$