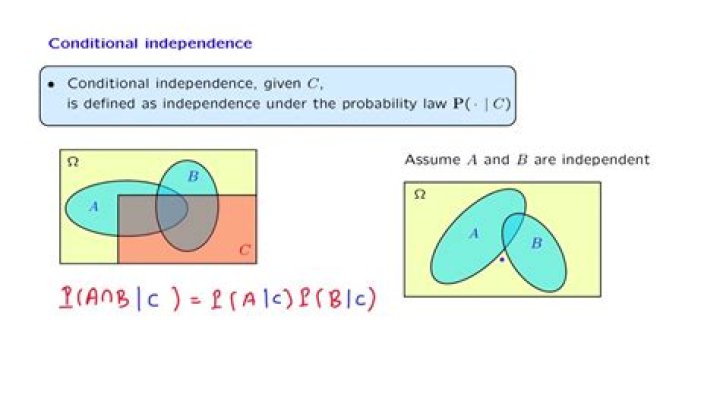

Properties of independence and conditional independence

Mia Lopez

I recently came across some properties on the Wikipedia page for conditional independence.

I don't quite understand the properties listed under "Rules of conditional independence" on that page.

Definition: $X\perp A$ means random variables $A$ and $X$ are independent of each other. $X\perp A\ |\ B$ means random variables $A$ and $X$ are conditionally independent given random variable $B$. $X\perp A,B$ means $X$ is independent of the pair $(A,B)$.

Question 1: How can I prove the "Contraction-weak-union-decomposition" property, stated as follows:
\begin{equation*}
\left. \begin{array}{l} X\perp A\ |\ B\\ X\perp B \end{array} \right\} \text{and} \quad \Leftrightarrow \quad X\perp A,B \quad \Rightarrow \quad \text{and} \left\{ \begin{array}{l} X\perp A\ |\ B\\ X\perp B\\ X\perp B\ |\ A\\ X\perp A \end{array} \right.
\end{equation*}

Question 2: How can I prove the "Intersection" rule, stated as follows:
\begin{equation*}
\left. \begin{array}{l} X\perp A\ |\ B\\ X\perp B\ |\ A \end{array} \right\} \text{and} \quad \Rightarrow \quad X\perp A,B
\end{equation*}

Question 3: Is the converse of the "Decomposition" rule still true? How should I understand it? Please give me an example.

Original proposition:
\begin{equation*}
X\perp A,B \quad \Rightarrow \quad \text{and} \left\{ \begin{array}{l} X\perp A\\ X\perp B \end{array} \right.
\end{equation*}
Converse:
\begin{equation*}
\left. \begin{array}{l} X\perp A\\ X\perp B \end{array} \right\} \text{and} \quad \Rightarrow \quad X\perp A,B
\end{equation*}
Thanks very much!

Answers

Here is a proof for Question 1.

First arrow, left to right:
\begin{eqnarray}
P(X, A, B) & = & P(X, A\ |\ B)P(B)\\
& = & P(X\ |\ B)P(A\ |\ B)P(B) \quad \text{because } X \text{ and } A \text{ are conditionally independent given } B\\
& = & P(X\ |\ B)P(A, B)\\
& = & P(X)P(A, B) \quad \text{because } X \text{ and } B \text{ are independent}
\end{eqnarray}
Hence, $X\perp A,B$.

First arrow, right to left:
\begin{eqnarray}
P(X, A, B) & = & P(X)P(A, B) \quad \text{because } X \text{ and } A,B \text{ are independent}\\
\int_B P(X, A, B)\,dB & = & \int_B P(X)P(A, B)\,dB\\
P(X, A) & = & P(X)P(A)
\end{eqnarray}
Hence, $X\perp A$. Similarly, integrating over $A$ instead of $B$ gives $X\perp B$.
\begin{eqnarray}
P(X, A\ |\ B) & = & \frac{P(X, A, B)}{P(B)}\\
& = & \frac{P(X)P(A, B)}{P(B)} \quad \text{because } X \text{ and } A,B \text{ are independent}\\
& = & P(X)P(A\ |\ B)\\
& = & P(X\ |\ B)P(A\ |\ B) \quad \text{because } X \text{ and } B \text{ are independent}
\end{eqnarray}
Hence, $X\perp A\ |\ B$.

Second arrow: this follows from the first arrow. The right-to-left argument already gives $X\perp A\ |\ B$, $X\perp B$ and $X\perp A$, and swapping the roles of $A$ and $B$ in that argument gives $X\perp B\ |\ A$.
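As a sanity check on the argument above (not a replacement for the proof), here is a minimal Python sketch that builds a small discrete joint distribution with $P(X,A,B)=P(X)P(A,B)$ and verifies the four conclusions of the second arrow exactly. The probabilities `p_x` and `p_ab` and the helper names `marginal`, `independent` and `cond_independent` are made up for illustration; conditional independence is tested in the product form $P(X,A,B)P(B)=P(X,B)P(A,B)$, which is equivalent when $P(B)>0$.

```python
from fractions import Fraction

# Hypothetical numbers, chosen only for illustration: a marginal for X and a
# joint for (A, B) in which A and B are NOT independent of each other.
p_x = {0: Fraction(1, 3), 1: Fraction(2, 3)}
p_ab = {(0, 0): Fraction(1, 2), (0, 1): Fraction(1, 8),
        (1, 0): Fraction(1, 8), (1, 1): Fraction(1, 4)}

# Build P(X, A, B) = P(X) P(A, B), i.e. X is independent of the pair (A, B).
joint = {(x, a, b): p_x[x] * p_ab[(a, b)] for x in p_x for (a, b) in p_ab}

VALUES = (0, 1)

def marginal(keep):
    """Marginal over the coordinates in `keep` (0 = X, 1 = A, 2 = B)."""
    out = {}
    for outcome, p in joint.items():
        key = tuple(outcome[i] for i in keep)
        out[key] = out.get(key, Fraction(0)) + p
    return out

def independent(i, j):
    """True if P(V_i, V_j) = P(V_i) P(V_j) for all values."""
    p_ij, p_i, p_j = marginal((i, j)), marginal((i,)), marginal((j,))
    return all(p_ij[(u, v)] == p_i[(u,)] * p_j[(v,)]
               for u in VALUES for v in VALUES)

def cond_independent(i, j, k):
    """True if P(V_i, V_j, V_k) P(V_k) = P(V_i, V_k) P(V_j, V_k) for all values,
    which is V_i independent of V_j given V_k when P(V_k) > 0."""
    p_ijk, p_ik, p_jk, p_k = (marginal((i, j, k)), marginal((i, k)),
                              marginal((j, k)), marginal((k,)))
    return all(p_ijk[(u, v, w)] * p_k[(w,)] == p_ik[(u, w)] * p_jk[(v, w)]
               for u in VALUES for v in VALUES for w in VALUES)

X, A, B = 0, 1, 2
checks = {
    "X indep A | B": cond_independent(X, A, B),
    "X indep B": independent(X, B),
    "X indep B | A": cond_independent(X, B, A),
    "X indep A": independent(X, A),
}
print(checks)
```

Using `Fraction` keeps the comparisons exact, so no floating-point tolerance is needed. All four checks hold here even though $A$ and $B$ are themselves dependent, which matches the proof: it never uses any relation between $A$ and $B$.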

The above answer and comments give proofs for Q1 and Q2. For Q3, the answer is no:

Let $A$ and $B$ be two independent Bernoulli random variables $\mathcal{B}(\frac{1}{2})$ (e.g. representing unbiased coin flips), and let $X = A \oplus B$ (i.e. $X = 1$ whenever $A \neq B$, $X=0$ otherwise).

Then for $x, a \in \{0,1\}$:

$P(X=x, A=a) = P(B=|x-a|, A=a) = 1/2 \cdot 1/2 = P(X=x) \cdot P(A=a)$.

Therefore, $X\perp A$. Similarly, $X\perp B$. However, $X$ is completely determined by $A$ and $B$, so we do not have $X\perp A,B$: for example, $P(X=1, A=0, B=0) = 0 \neq \frac{1}{8} = P(X=1) \cdot P(A=0, B=0)$.
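The counterexample is small enough to check by brute force. The following Python sketch enumerates the four equally likely outcomes of $(A, B)$, sets $X = A \oplus B$, and tests each factorization exactly. The helper `prob` and the variable names are illustrative choices, not part of the original answer.

```python
from fractions import Fraction
from itertools import product

# A and B are independent fair coins and X = A XOR B, as in the example above.
joint = {(a ^ b, a, b): Fraction(1, 4) for a, b in product((0, 1), repeat=2)}

def prob(x=None, a=None, b=None):
    """Probability that each variable passed as an argument takes that value."""
    return sum((p for (xv, av, bv), p in joint.items()
                if x in (None, xv) and a in (None, av) and b in (None, bv)),
               Fraction(0))

values = (0, 1)

# Pairwise independence: P(X, A) = P(X) P(A) and P(X, B) = P(X) P(B).
x_indep_a = all(prob(x=x, a=a) == prob(x=x) * prob(a=a)
                for x, a in product(values, repeat=2))
x_indep_b = all(prob(x=x, b=b) == prob(x=x) * prob(b=b)
                for x, b in product(values, repeat=2))

# Independence from the pair: P(X, A, B) = P(X) P(A, B).
x_indep_ab = all(prob(x=x, a=a, b=b) == prob(x=x) * prob(a=a, b=b)
                 for x, a, b in product(values, repeat=3))

print(x_indep_a, x_indep_b, x_indep_ab)  # True True False
```

The last check fails because, for instance, the outcome $X=1, A=0, B=0$ never occurs, while the product $P(X=1)\,P(A=0,B=0)$ equals $\frac{1}{8}$.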
