Proof that columns of an invertible matrix are linearly independent

Matthew Martinez

Explain why the columns of an $n \times n$ matrix $A$ are linearly independent when $A$ is invertible.

The proof that I thought of was:

If $A$ is invertible, then $A \sim I$ ($A$ is row equivalent to the identity matrix). Therefore, $A$ has $n$ pivots, one in each column, which means that the columns of $A$ are linearly independent.

The proof that was provided was:

Suppose $A$ is invertible. Therefore the equation $Ax = 0$ has only one solution, namely, the zero solution. This means that the columns of $A$ are linearly independent.

I am not sure whether or not my proof is correct. If it is, would there be a reason to prefer one proof over the other?

As seen in the Wikipedia article and in Linear Algebra and Its Applications, $\sim$ indicates row equivalence between matrices.

I would say that the textbook's proof is better because it proves what needs to be proven without using facts about row operations along the way. To see that this is the case, it may help to write out all of the definitions at work here, and all the facts that get used along the way.

Definitions:

- $A$ is invertible if there exists a matrix $A^{-1}$ such that $AA^{-1} = A^{-1}A = I$.

- The vectors $v_1,\dots,v_n$ are linearly independent if the only solution to $x_1v_1 + \cdots + x_n v_n = 0$ (with $x_i \in \Bbb R$) is $x_1 = \cdots = x_n = 0$.

Textbook Proof:

Fact: With $v_1,\dots,v_n$ referring to the columns of $A$, the equation $x_1v_1 + \cdots + x_n v_n = 0$ can be rewritten as $Ax = 0$. (This is true by definition of matrix multiplication)

Now, suppose that $A$ is invertible. We want to show that the only solution to $Ax = 0$ is $x = 0$ (and by the above fact, we'll have proven the statement).

Multiplying both sides by $A^{-1}$ gives us $$ Ax = 0 \implies A^{-1}Ax = A^{-1}0 \implies x = 0 $$ So, we may indeed state that the only $x$ with $Ax = 0$ is the vector $x = 0$.
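As a small sanity check, here is a worked $2 \times 2$ instance of this argument. The matrices are arbitrary choices for illustration, not taken from the question:

$$ A = \begin{pmatrix} 2 & 1 \\ 1 & 1 \end{pmatrix}, \qquad A^{-1} = \begin{pmatrix} 1 & -1 \\ -1 & 2 \end{pmatrix}, \qquad Ax = 0 \implies x = A^{-1}(Ax) = A^{-1}0 = 0. $$

By contrast, a matrix with dependent columns admits a nonzero solution, so it cannot have an inverse:

$$ \begin{pmatrix} 1 & 2 \\ 2 & 4 \end{pmatrix} \begin{pmatrix} 2 \\ -1 \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \end{pmatrix}, \qquad \text{that is,} \quad 2v_1 - v_2 = 0. $$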

Your Proof:

Fact: With $v_1,\dots,v_n$ referring to the columns of $A$, the equation $x_1v_1 + \cdots + x_n v_n = 0$ can be rewritten as $Ax = 0$. (This is true by definition of matrix multiplication)

Fact: If $A$ is invertible, then $A$ is row-equivalent to the identity matrix.

Fact: If $R$ is the row-reduced version of $A$, then $R$ and $A$ have the same nullspace. That is, $Rx = 0$ and $Ax = 0$ have the same solutions.

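From the above facts, we conclude that if $A$ is invertible, then $A$ is row-equivalent to $I$, so $Ax = 0$ and $Ix = 0$ have the same solutions. Since the columns of $I$ are linearly independent, the only solution to $Ix = 0$ is $x = 0$. Hence the only solution to $Ax = 0$ is $x = 0$, and the columns of $A$ must be linearly independent.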
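The pivot-counting version of this argument can also be checked mechanically. The following is only a rough sketch in Python, using exact fractions to avoid rounding issues; the helper names `rref` and `columns_independent` and the two test matrices are my own choices for illustration, not part of the question or of any library.

```python
from fractions import Fraction


def rref(matrix):
    """Hypothetical helper: return (R, pivot_cols), where R is the reduced
    row echelon form of `matrix` and pivot_cols lists its pivot columns."""
    rows = [[Fraction(v) for v in row] for row in matrix]
    n_rows, n_cols = len(rows), len(rows[0])
    pivot_cols = []
    r = 0  # index of the row where the next pivot will be placed
    for c in range(n_cols):
        # Look for a row at or below r with a nonzero entry in column c.
        pivot = next((i for i in range(r, n_rows) if rows[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        rows[r], rows[pivot] = rows[pivot], rows[r]
        pivot_value = rows[r][c]
        rows[r] = [v / pivot_value for v in rows[r]]  # scale pivot to 1
        for i in range(n_rows):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                # Clear the rest of column c using the pivot row.
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivot_cols.append(c)
        r += 1
        if r == n_rows:
            break
    return rows, pivot_cols


def columns_independent(matrix):
    """Hypothetical helper: columns are independent exactly when every
    column of the matrix contains a pivot."""
    _, pivot_cols = rref(matrix)
    return len(pivot_cols) == len(matrix[0])


A = [[2, 1], [1, 1]]  # invertible example (assumed for illustration)
S = [[1, 2], [2, 4]]  # singular example: second column is twice the first

R, pivots = rref(A)
print(R == [[1, 0], [0, 1]], pivots)
print(columns_independent(A), columns_independent(S))
```

For the invertible example the reduced form is the identity with a pivot in each column, while the singular example loses a pivot, which is exactly the distinction the proof relies on.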

It might be worth pointing out a fascinating proof in the book Algebra by Michael Artin that

The columns of a square matrix $A$ are linearly independent if and only if $A$ is invertible.

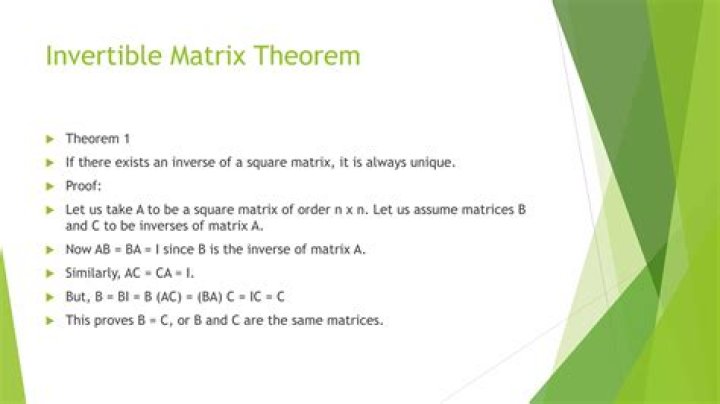

The proof proceeds by circularly proving the following chain of implications: (a) $\implies$ (b) $\implies$ (c) $\implies$ (d) $\implies$ (a). All four conditions from (a) to (d) are therefore equivalent.

(a) $A$ can be reduced to the identity by a sequence of elementary row operations.

(b) $A$ is a product of elementary matrices.

(c) $A$ is invertible.

(d) $AX = 0$ has only the trivial solution $X = 0$.
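To illustrate the step from (a) to (b), here is a small example of my own (the matrix and the names $E_1$, $E_2$ are assumptions for illustration, not taken from Artin). Subtracting row 2 from row 1, and then row 1 from row 2, reduces $A$ to the identity:

$$ A = \begin{pmatrix} 2 & 1 \\ 1 & 1 \end{pmatrix}, \qquad E_1 = \begin{pmatrix} 1 & -1 \\ 0 & 1 \end{pmatrix}, \qquad E_2 = \begin{pmatrix} 1 & 0 \\ -1 & 1 \end{pmatrix}, \qquad E_2 E_1 A = I. $$

Since each elementary matrix is invertible with an elementary inverse, $A$ is itself a product of elementary matrices:

$$ A = E_1^{-1} E_2^{-1} = \begin{pmatrix} 1 & 1 \\ 0 & 1 \end{pmatrix} \begin{pmatrix} 1 & 0 \\ 1 & 1 \end{pmatrix}. $$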

We want to prove that if $A$ is invertible, then the column vectors of $A$ are linearly independent. We know that if $A$ is invertible, then the rref of $A$ is the identity matrix, so the row vectors of $A$ are linearly independent.

We also know that if $A$ is invertible, then the transpose of $A$ is also invertible. By the same argument, the row vectors of the transpose of $A$ are linearly independent, and these are exactly the column vectors of $A$, so the column vectors of $A$ are linearly independent.

Suppose the columns $C_1, \ldots, C_n$ of $A$ are linearly independent. Then $\{C_1, C_2, \ldots, C_n\}$ is a basis of $R^n$. If $E_i$ for $i=1, \ldots, n$ are the standard basis vectors of $R^n$, then we can write $$E_i=C_1 B_{1i} + C_2 B_{2i} +\ldots + C_n B_{ni} \tag{A}$$ for a unique set of constants $B_{ji}$, $j = 1, \ldots, n$. (In short, each standard basis vector of $R^n$ is a unique linear combination of the basis vectors $C_1, C_2, \ldots, C_n$ of $R^n$.)

Using the standard basis of $R^n$ and the entries $A_{ij}$ of the matrix $A$, we know that any column of $A$ can be written as $$C_i = A_{1i} E_1 + A_{2i} E_2 + \ldots + A_{ni} E_n. \tag{B}$$ Substituting (B) into (A) gives

$$E_i = (A_{11}E_1 + \ldots + A_{n1}E_n)B_{1i} + (A_{12}E_1 + \ldots + A_{n2}E_n)B_{2i} +\ldots + (A_{1n}E_1 + \ldots + A_{nn}E_n) B_{ni} $$

$$E_i = (A_{11}B_{1i} +\ldots + A_{1n}B_{ni})E_1 +\ldots +(A_{i1}B_{1i} +\ldots+ A_{in}B_{ni})E_i +\ldots +(A_{n1}B_{1i} +\ldots + A_{nn}B_{ni}) E_n \tag{C}$$

Comparing the left-hand and right-hand sides, and using the linear independence of the standard basis vectors, it is evident that

$$(A_{i1}B_{1i} +\ldots + A_{in}B_{ni})=1 \text{ for } i = 1, \ldots, n. \tag{D}$$

whereas

$$(A_{j1}B_{1i} +\ldots + A_{jn}B_{ni})=0 \text{ for } j \ne i \tag{E}$$

If $B=(B_{ij})$ is the matrix of these constants, then from $(B),(D),(E)$ we have $AB=I$, and hence $B$ is the inverse of $A$ (for square matrices, $AB = I$ also implies $BA = I$).

(Note: this proof uses no row or column operations and no determinants. It uses only one simple fact: the linear independence of the basis vectors $C_1, \ldots, C_n$ and of the standard basis vectors.)
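To make the construction concrete, here is a small worked instance. The matrix is an arbitrary choice for illustration and does not come from the argument above. Take $A$ with columns $C_1 = (2, 1)^T$ and $C_2 = (1, 1)^T$. Expanding the standard basis vectors as in (A):

$$ E_1 = 1 \cdot C_1 + (-1) \cdot C_2, \qquad E_2 = (-1) \cdot C_1 + 2 \cdot C_2, $$

so $B_{11} = 1$, $B_{21} = -1$, $B_{12} = -1$, $B_{22} = 2$, and

$$ AB = \begin{pmatrix} 2 & 1 \\ 1 & 1 \end{pmatrix} \begin{pmatrix} 1 & -1 \\ -1 & 2 \end{pmatrix} = \begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix} = I. $$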

I would put it concisely as follows:

$$ \begin{aligned} \mathbf{A}\ \text{invertible} \quad &\Leftrightarrow \quad \det(\mathbf{A}) \ne 0 \\ &\Leftrightarrow \quad \left( \mathbf{x}^T \mathbf{A} = \mathbf{0} \;\Rightarrow\; \mathbf{x}^T = \mathbf{0} \right) \\ &\Leftrightarrow \quad \left( \mathbf{A}\,\mathbf{x} = \mathbf{0} \;\Rightarrow\; \mathbf{x} = \mathbf{0} \right) \\ &\Leftrightarrow \quad \text{columns (and rows) of } \mathbf{A} \text{ linearly independent} \end{aligned} $$

I'll use rows instead of columns, but the argument for columns holds similarly. Suppose a square matrix $A$ is invertible; that means it is row equivalent to the identity matrix. Now, observing that the rows of the identity are linearly independent, you can apply the reverse row operations to the rows of the identity to get the rows of $A$. Since elementary row operations do not change the row space, this shows that the rows of $A$ are linearly independent.

Conversely, suppose the rows of $A$ are linearly independent. Applying row operations to $A$ until you have a reduced echelon matrix will give you a matrix with no zero rows, and hence it must be the identity. Therefore $A$ is row equivalent to the identity, and so $A$ is invertible.