`pd.concat` with `join=='inner'` doesn't produce intersection of pandas dataframes

Sophia Terry

Sophia Terry

I am trying to extract common rows from several dataframes using pd.concat:

>>> import numpy as np

>>> import pandas as pd

>>> x = np.random.random(size=(5, 3))

>>> df1 = pd.DataFrame(x)

>>> df2 = pd.DataFrame(x[1:3])

>>> df3 = pd.DataFrame(x[2:4])

>>> df1 0 1 2

0 0.257662 0.453542 0.805230

1 0.060493 0.463148 0.715994

2 0.452379 0.470137 0.965709

3 0.447546 0.964252 0.163247

4 0.187212 0.973557 0.871090

>>> df2 0 1 2

0 0.060493 0.463148 0.715994

1 0.452379 0.470137 0.965709

>>> df3 0 1 2

0 0.452379 0.470137 0.965709

1 0.447546 0.964252 0.163247As you can see, only the row 0.452379 0.470137 0.965709 is common to all three dataframes. To extract it, I tried:

>>> pd.concat([df1, df2, df3], join='inner') 0 1 2

0 0.257662 0.453542 0.805230

1 0.060493 0.463148 0.715994

2 0.452379 0.470137 0.965709

3 0.447546 0.964252 0.163247

4 0.187212 0.973557 0.871090

0 0.060493 0.463148 0.715994

1 0.452379 0.470137 0.965709

0 0.452379 0.470137 0.965709

1 0.447546 0.964252 0.163247Thus, join==inner doesn't seem to work! I should also point out that ignore_index=True has no effect on the behavior. In an article on Real Python, using axis=1 is suggested. However, it is wrong in my opinion:

>>> pd.concat([df1, df2, df3], join='inner', axis=1) 0 1 2 0 1 2 0 1 2

0 0.257662 0.453542 0.805230 0.060493 0.463148 0.715994 0.452379 0.470137 0.965709

1 0.060493 0.463148 0.715994 0.452379 0.470137 0.965709 0.447546 0.964252 0.163247What is wrong with what I am doing? Also, how would I extract common rows from several dataframes if this way doesn't work? I am using Pandas version 0.25.3.

25 Answers

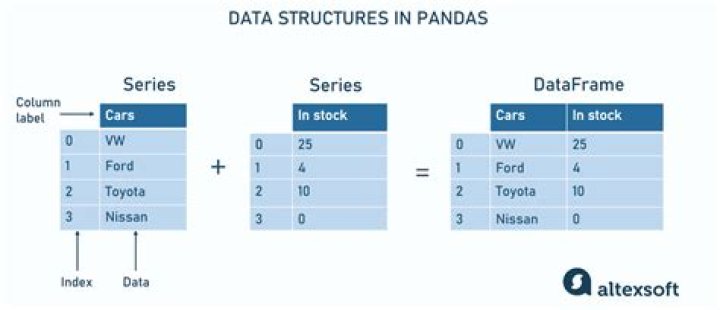

In short, go with reduce(lambda left,right: pd.merge(left,right,on=cols), dfs),

(see Method #2 - make sure to include from functools import reduce), but please see an explanation for pd.concat (Method #1):

Method #1 (concat): I think the most dynamic, robust pd.concat way (of the ways I've tried with concat specifically) is to use. The only major benefit of this solution over the second method below is that you don't have to use an additional library; however, I think you could also write similar code with merge without having to use another library:

dfs = [df1, df2, df3]

cols = [*df1.columns] # enclosing with [*] is the same as tolist()

for df in dfs: df.set_index(cols, inplace=True) # can only use inplace when looping through dfs (at least using my simpler method)

pd.concat(dfs, join='inner', axis=1).reset_index() # see below paragraph for explanation

Out[1]: 0 1 2

0 0.452379 0.470137 0.965709Please note that join='inner' means you are joining on the index NOT the unique rows. Also, join only matters if you pass axis=1, which is why effectively nothing happens.

Method #2: (merge with reduce):

@Anky pointed out that how='inner' is default with merge. This was actually the first answer I posted, but I got confused about expected output and went full circle. Please see the simplest answer below:

from functools import reduce

dfs = [df1, df2, df3]

cols = [*df1.columns]

reduce(lambda left,right: pd.merge(left,right,on=cols), dfs)

Out[2]: 0 1 2

0 0.452379 0.470137 0.965709If you are attempting to look for common rows:

temp = pd.concat([df1, df2, df3])

temp[temp.duplicated()]I'm sure there is a more elegant solution to this, however.

1Try this,

df = pd.merge(df1, df2, how='inner', on=[col1, col2, col3])# add extral tag column

df_list = [df1, df2, df3]

for i, dfi in enumerate(df_list): dfi['tag'] = i + 1

# merge DataFrame

df = pd.concat([df1, df2, df3], ignore_index=True)

# find the duplicates rows

cols = df.columns[:-1].tolist()

cond = df[cols].duplicated(keep=False)

obj = df[cond].groupby(cols)['tag'].agg(tuple)

# filter

cond = obj.map(len) == len(df_list)

obj[cond]obj example:

# 0 1 2

# 0.148080 0.837398 0.565498 (1, 3)

# 0.572673 0.256735 0.620923 (1, 2, 3)

# 0.822542 0.856137 0.645639 (1, 2)

# Name: tag, dtype: objectIn a similar fashion to what @Ajay A said,

import numpy as np

import pandas as pd

x = np.random.random(size=(5, 3))

df1 = pd.DataFrame(x)

df2 = pd.DataFrame(x[1:3])

df3 = pd.DataFrame(x[2:4])Then,

df1

Out[22]: 0 1 2

0 0.845894 0.530659 0.629198

1 0.697229 0.225557 0.314540

2 0.972633 0.685077 0.191109

3 0.069966 0.961317 0.352933

4 0.176633 0.663602 0.235032

df2

Out[23]: 0 1 2

0 0.697229 0.225557 0.314540

1 0.972633 0.685077 0.191109

df3

Out[24]: 0 1 2

0 0.972633 0.685077 0.191109

1 0.069966 0.961317 0.352933Then you can use pd.merge with how='inner'

pd.merge(df2, df3, how='inner')

Out[25]: 0 1 2

0 0.972633 0.685077 0.191109or if what you are looking for is soing the intersection of the three,

pd.merge(pd.merge(df1,df2,how='inner'), df3, how='inner')

Out[26]: 0 1 2

0 0.972633 0.685077 0.191109Use a for loop to handle a df_list.

df_list = [df1, df2, df3]

df_intersection = df1

for df in df_list[1:]: df_intersection = pd.merge(df_intersection, df, how='inner')