numpy.dot for dimensions > 2

Sophia Terry

Sophia Terry

I am trying to understand how dot product works for dimensions more than 2.

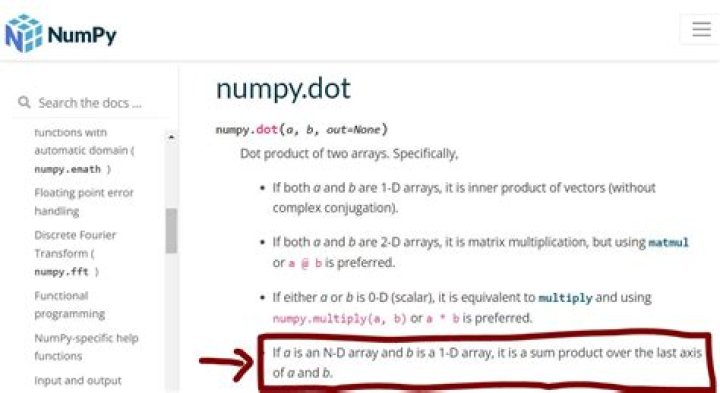

The documentation says:

If a is an N-D array and b is an M-D array (where M>=2), it is a sum product over the last # axis of a and the second-to-last axis of b:

dot(a, b)[i,j,k,m] = sum(a[i,j,:] * b[k,:,m])

I don't understand this rule -- where does it come from? Why is it the last axis of a and the second-to-last axis of b?

34 Answers

It may be easier to visualize this using the notation of np.einsum. Start with regular 2D matrix multiplication, which is pretty unambiguous, and follows "normal math" rules:

a = np.ones((2, 3))

b = np.ones((3, 4))

np.einsum('ij,jk->ik', a, b) # Same as a.dot(b)Now prepend a few dimensions:

a = np.ones((2, 3, 4, 5))

b = np.ones((8, 7, 5, 6))

np.einsum('ijkl,nolp->ijknop', a, b) # Same as a.dot(b)In general, a multidimensional array can be thought of as a stack of matrices. There are a number of reasons that numpy generally places the "elements" of an array, whether features, samples, vectors, or matrices along the last dimensions. So, regardless of dot, it is fairly standard to think of a as a 2x3 array of 4x5 matrices. Similarly, b is a 8x7 array of 5x6 matrices.

There are two options going forward. The last two dimensions of the result are unambiguous no matter what choice you make: the output will contain 4x6 matrices. You can compute all the possible combinations of 2x3x8x7 such matrices, or you can expect the leading dimensions to broadcast together. np.dot takes the former approach, while @/np.matmul takes the latter. Anecdotally, I've found that most people will find the latter more intitive.

The exact reason that np.dot computes the matrix product of all possible combinations of a and b is known only to the developers making that choice. It likely revolves around not wanting to raise an error, and the rules of broadcasting not being as universally cemented at the time dot was first implemented. Once you decide to take the products of all the combinations, you can decide to do it like this:

np.einsum('ijkl,nolp->ijnokp', a, b) # Not `np.dot`On the one hand, this would reflect the idea of a 2x3x8x7 array of 4x6 matrices better. On the other hand, it creates an awkward permutation of dimensions with k out of place in the sequence. The developers went with the arguably less awkward approach of grouping the dimensions from each array separately.

You can always a transpose an array to the shape you want after all.

Standard matrix multiplication definition:

Which is equivalent to:

dot(a, b)[i,j] = sum(a[i,:] * b[:,j])When including more dimensions, we still sum over the last axis of a and second-last axis of b.

dot(a, b)[a1,a2,..., b1,b2,..., i,j] = sum( a[a1,a2,...,i,:] * b[b1,b2,...,:,j]

)In some sense, the additional dimensions for a and b can each be imagined as a "multi-dimensional array" of many matrices. And dot performs as many standard matrix multiplications as it can with the two "multi-dimensional arrays" of many matrices.

Here's my best attempt at making sense of the rule.

Suppose that a is a p1 x ... x pm-1 x pm array and that b is a q1 x ... x qn-1 x qn array. The dot function seems to be interpreting a as an p1 x ... x pm-2 array of pm-1 x pm matrices, and b as a q1 x ... x qn-2 array of qn-1 x qn matrices.

With that established, the entry dot(a,b)[i_1,...,i_(n-2),k_1,j_1,...,j_(m-2),k_2] is the k_1,k_2 entry of the matrix productdot(a[i_1,...,i_(n-2),:,:],b[j_1,...,j_(m-2),:,:]) of a pm-1 x pm with a qn-1 x qn. Notably, this product is only defined if pm=qn-1.

First try to understand the case where both arrays are 2d.

"the last axis of a and the second-to-last axis of b"How where you taught to do the matrix product by-hand? I remember tracing my finger across the columns of one array (the last dimension) and down the the rows of the other (first, or 2nd to the last for 2d).

I like the equivalent einsum notation, np.einsum('ij,jk->ik',a,b). Note where the j is.

Most articles about matrix multiplication will illustrate this across/down calculation, e.g.

Once we understand the handling of the last 2 dimension, we can address the initial ones. Note that linear-algebra does not define matrix product for "3d" arrays. MATLAB doesn't either. But numpy has extended it to handle the larger number of dimensions.

np.dot has been defined to effectively do an 'outer' product on the non-shared dimensions.

dot(a, b)[i,j,k,m] = sum(a[i,j,:] * b[k,:,m])

np.einsum('ijo,kom->ijkm', a, b)o is the sum-of-products dimension, last of a, 2nd to the last of b. The other dimensions are joined in order, ij from a, km from b. Reducing that to 2d

np.einsum('jo,om->jm', a, b)That hasn't been too useful, so more recent versions implement which treats the leading dimensions (before the last 2) as batch dimensions, with the added rule that they obey numpy's broadcasting rules.

a@b # for 3d arrays, with common leading dimension

np.einsum('ijo,iom->ikm', a, b) # i is repeatedBoth np.dot and matmul have special cases to handle 1d arrays - they are important, but I won't go into those now.