Joint probability function in the expected value of the sum and of the product of two random variables

Matthew Martinez

I noticed that the same joint probability function $f_{XY}(x,y)$ is used to calculate both the expected value of the sum, $E[X+Y]$, and the expected value of the product, $E[XY]$, of two random variables.

How can we use the same joint probability in two totally different cases? What is the intuition here?

For the sum, I was expecting to use $f_{X+Y}(x,y)$, and for the product, $f_{XY}(x,y)$.

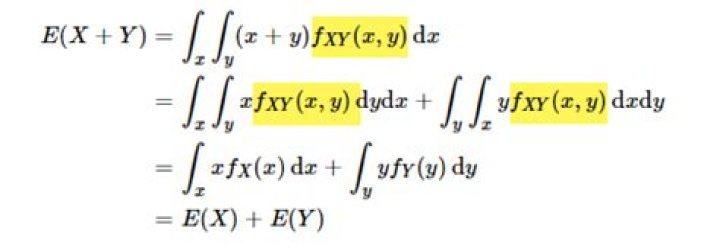

As you can see from the pictures, $f_{XY}(x,y)$ is used for both calculations.

Thank you

2 Answers

In general, if you have two random variables $X$ and $Y$ with joint density $f(x,y)$, then the expectation of the random variable $g(X,Y)$ is given by $$ E(g(X,Y))=\iint g(x,y)f(x,y)\,dx\,dy\tag{0} $$

This is sometimes called the Law of the unconscious statistician.
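As a rough numerical illustration of (0), the sketch below plugs two different functions $g$ into the same joint density. The density $f(x,y)=x+y$ on the unit square, the helper name `expect`, and the midpoint-rule grid size are all assumptions made for this example; they do not come from the question.

```python
def joint_density(x, y):
    # Hypothetical joint density on the unit square: f(x, y) = x + y.
    # It is non-negative there and integrates to 1.
    return x + y


def expect(g, f, n=200):
    """Midpoint-rule approximation of the double integral of g(x, y) * f(x, y)
    over the unit square [0, 1] x [0, 1]."""
    h = 1.0 / n
    total = 0.0
    for i in range(n):
        x = (i + 0.5) * h
        for j in range(n):
            y = (j + 0.5) * h
            total += g(x, y) * f(x, y)
    return total * h * h


# Same density f, two different choices of g.
e_sum = expect(lambda x, y: x + y, joint_density)   # approximates E(X + Y)
e_prod = expect(lambda x, y: x * y, joint_density)  # approximates E(XY)
print(e_sum, e_prod)
```

For this particular density the exact values are $7/6$ and $1/3$, so the two printed numbers should be close to those. Only $g$ changes between the two calls; the density stays the same.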

Note 1.

The "density" $f_{X+Y}(x,y)$ does not make sense, and neither does the other one. If you write $Z=X+Y$, then this is a single random variable, so its density is a function of one variable only. Its expectation is given by $$ E(Z)=\int zf_Z(z)dz $$ if you know the density function $f_Z$ of $Z$. The law mentioned above allows you to calculate the expectation without knowing the density $f_Z$.

Note 2.

Given two random variables $X$ and $Y$ and a function $g:\mathbb{R}^2\to\mathbb{R}$ (with good properties), you can define another random variable $Z$ by $Z=g(X,Y)$. For instance, if $g(x,y)=x+y$, then you have $Z=X+Y$; if $g(x,y)=xy$, you have $Z=XY$.

By definition of the expectation, for any continuous random variable $W$ with density function $f_W$, you have$$ E(W)=\int xf_W(x)dx:=\int_{-\infty}^\infty xf_W(x)dx\tag{1} $$

So in the example of $Z=X+Y$, there are three ways to express its expectation.

1. You can use the linearity of expectation to get $$ E(X+Y)=E(X)+E(Y)=\int xf_X(x)dx+\int yf_Y(y)dy $$ where $f_X$ and $f_Y$ are the density functions for $X$ and $Y$. Note that in the second integral, $y$ is a dummy variable; you can also write it as $\int xf_Y(x)dx$.

2. You can use (0): $$ E(X+Y)=\iint (x+y)f(x,y)dxdy $$ where $f$ is the joint density function of $X$ and $Y$.

3. If you denote by $f_Z$ the density function of $Z=X+Y$, then $$ E(X+Y)=\int zf_Z(z)dz $$

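The functions $f$, $f_X$, $f_Y$, $f_Z$ appearing in these three ways are related: $f_X$ and $f_Y$ are the marginals of $f$, for example $f_X(x)=\int f(x,y)dy$, and $f_Z$ is also determined by $f$ through $f_Z(z)=\int f(x,z-x)dx$.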
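To check that the three routes agree, here is a minimal numerical sketch. It assumes a made-up joint density $f(x,y)=x+y$ on the unit square (zero elsewhere) and a simple midpoint rule; the names `integrate`, `f_X`, `f_Y`, `f_Z` are chosen for this example only. The marginals and the density of $Z=X+Y$ are all computed from the same joint density.

```python
def f(x, y):
    # Hypothetical joint density: x + y on the unit square, 0 elsewhere.
    return x + y if 0.0 <= x <= 1.0 and 0.0 <= y <= 1.0 else 0.0


def integrate(func, a, b, n=400):
    """Midpoint-rule approximation of the integral of func over [a, b]."""
    h = (b - a) / n
    return sum(func(a + (k + 0.5) * h) for k in range(n)) * h


def f_X(x):
    # Marginal density of X: integrate the joint density over y.
    return integrate(lambda y: f(x, y), 0.0, 1.0)


def f_Y(y):
    # Marginal density of Y: integrate the joint density over x.
    return integrate(lambda x: f(x, y), 0.0, 1.0)


def f_Z(z):
    # Density of Z = X + Y: integrate f(x, z - x) over x.
    return integrate(lambda x: f(x, z - x), 0.0, 1.0)


# Way 1: linearity, using the two marginal densities.
way1 = integrate(lambda x: x * f_X(x), 0.0, 1.0) + integrate(lambda y: y * f_Y(y), 0.0, 1.0)
# Way 2: formula (0) with g(x, y) = x + y and the joint density.
way2 = integrate(lambda x: integrate(lambda y: (x + y) * f(x, y), 0.0, 1.0), 0.0, 1.0)
# Way 3: the density of Z itself; Z takes values in [0, 2].
way3 = integrate(lambda z: z * f_Z(z), 0.0, 2.0)
print(way1, way2, way3)
```

For this density the exact value is $7/6$, and all three printed numbers should be close to it. Way 2 is the only one that never needs $f_X$, $f_Y$ or $f_Z$.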

By the law of the unconscious statistician,

$$E(g(X,Y))=\int_{-\infty}^\infty\int_{-\infty}^\infty g(x,y)f_{X,Y}(x,y)dxdy$$

So in the first case

$$\begin{split}E(X+Y)&=\int_{-\infty}^\infty\int_{-\infty}^\infty(x+y)f_{X,Y}(x,y)dxdy\\ &=\int_{-\infty}^\infty\int_{-\infty}^\infty xf_{X,Y}(x,y)dxdy+\int_{-\infty}^\infty\int_{-\infty}^\infty yf_{X,Y}(x,y)dxdy\end{split}$$

Note that $\int_{-\infty}^\infty f_{X,Y}(x,y)dy=f_X(x)$, the marginal pdf of $X$, and likewise $\int_{-\infty}^\infty f_{X,Y}(x,y)dx=f_Y(y)$, the marginal pdf of $Y$, so that

$$\begin{split}\int_{-\infty}^\infty x\left[\int_{-\infty}^\infty f_{X,Y}(x,y)dy\right]dx+\int_{-\infty}^\infty y\left[\int_{-\infty}^\infty f_{X,Y}(x,y)dx\right]dy &=\int_{-\infty}^\infty xf_X(x)dx+\int_{-\infty}^\infty yf_Y(y)dy\\ &=E(X)+E(Y)\end{split}$$
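The same bookkeeping can be checked exactly in a discrete setting, where sums replace the integrals. The joint pmf below is a made-up example (not from the question) in which $X$ and $Y$ are dependent, to illustrate that the derivation above never uses independence.

```python
from fractions import Fraction as F

# Hypothetical joint pmf of a dependent pair (X, Y); the numbers are illustrative only.
joint = {(0, 0): F(1, 8), (0, 1): F(3, 8), (1, 0): F(2, 8), (1, 1): F(2, 8)}

# Marginal pmfs: sum the joint pmf over the other variable.
p_x, p_y = {}, {}
for (x, y), p in joint.items():
    p_x[x] = p_x.get(x, 0) + p
    p_y[y] = p_y.get(y, 0) + p

# E(X + Y) computed from the joint pmf with g(x, y) = x + y.
e_sum = sum((x + y) * p for (x, y), p in joint.items())
# E(X) and E(Y) computed from the marginals.
e_x = sum(x * p for x, p in p_x.items())
e_y = sum(y * p for y, p in p_y.items())

# X and Y are dependent: the joint pmf is not the product of the marginals.
dependent = any(joint[(x, y)] != p_x[x] * p_y[y] for (x, y) in joint)

print(e_sum, e_x + e_y, dependent)
```

Here `e_sum` and `e_x + e_y` are the same fraction even though `dependent` is true.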

For the second one,

$$E(XY)=\int_{-\infty}^\infty\int_{-\infty}^\infty xy f_{X,Y}(x,y)dxdy$$

If, in addition, $X$ and $Y$ are independent, then $f_{X,Y}(x,y)=f_X(x)f_Y(y)$ so that

$$\begin{split}\int_{-\infty}^\infty\int_{-\infty}^\infty xy f_{X,Y}(x,y)dxdy &=\int_{-\infty}^\infty x\left[\int_{-\infty}^\infty y f_{Y}(y)dy\right]f_X(x)dx\\ &=\int_{-\infty}^\infty xE(Y)f_X(x)dx\\ &=E(Y)\int_{-\infty}^\infty xf_X(x)dx\\ &=E(Y)E(X)\end{split}$$
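To see that the factorisation really depends on independence, the following sketch compares two made-up joint pmfs with the same marginals: one built as the product of the marginals, one not. All numbers and the helper names `mean` and `e_product` are assumptions for illustration.

```python
from fractions import Fraction as F

# Hypothetical marginal pmfs of X and Y.
p_x = {0: F(1, 2), 1: F(1, 2)}
p_y = {0: F(3, 8), 1: F(5, 8)}


def mean(pmf):
    """Expectation of a single discrete random variable."""
    return sum(v * p for v, p in pmf.items())


def e_product(joint):
    """E(XY) from a joint pmf, using g(x, y) = x * y."""
    return sum(x * y * p for (x, y), p in joint.items())


# Independent case: the joint pmf is the product of the marginals.
independent = {(x, y): px * py for x, px in p_x.items() for y, py in p_y.items()}
# Dependent case: same marginals as above, but a different joint pmf.
dependent = {(0, 0): F(1, 8), (0, 1): F(3, 8), (1, 0): F(2, 8), (1, 1): F(2, 8)}

print(e_product(independent), mean(p_x) * mean(p_y))  # equal
print(e_product(dependent), mean(p_x) * mean(p_y))    # not equal
```

In both cases $E(XY)$ is computed from the joint pmf in the same way; only in the independent case does it reduce to $E(X)E(Y)$.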
