Is this the right way to merge LoRA weights?

Matthew Barrera

I have fine-tuned RoBERTa using LoRA with the Hugging Face libraries, which produced multiple LoRA files.

I want to merge those LoRA weights without changing the original model, so I wrote the code below.

    from peft import (
        # ...
        PeftModel,
        # ...
    )
    from transformers import (
        # ...
        RobertaForSequenceClassification,
        # ...
    )

    model = RobertaForSequenceClassification.from_pretrained("roberta-base")

    # "lora-1" and "lora-2" are local directories.
    model = PeftModel.from_pretrained(model, "lora-1")
    model = model.merge_and_unload()
    model = PeftModel.from_pretrained(model, "lora-2")

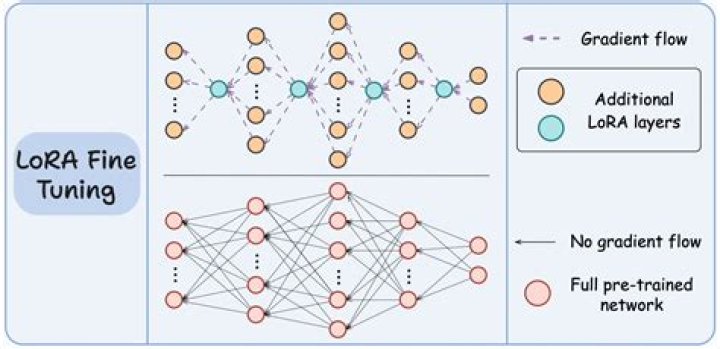

    model = model.merge_and_unload()

The code works, but I'm a bit suspicious because I don't know exactly how merge_and_unload() works. Judging by the name, I guess it merges all the LoRA weights into the base model's weights and produces one final, single model. But as far as I know, that's not how LoRA works.

To summarize, I want to produce p(phi_0 + delta phi_1(theta_1) + delta phi_2(theta_2) + ... + delta phi_n(theta_n)), not a single merged p(phi_0). (Sorry for the equation text, I don't have enough reputation to link an online equation SVG!)
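For reference, the target expression can be checked numerically for a single weight matrix. This is a minimal NumPy sketch, not PEFT's actual implementation: it assumes each adapter contributes an update of the form scaling * B @ A, that both adapters were trained against the same frozen base weights, and all shapes, ranks, and scaling values are made up for illustration. Under those assumptions, applying the two updates one after another gives the same matrix as adding their sum to the base weights, and the base matrix stays untouched as long as the merge is done on a copy.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative layer shape (assumption, not taken from RoBERTa).
d_out, d_in = 8, 6

# Frozen base weight, playing the role of phi_0.
W0 = rng.normal(size=(d_out, d_in))
W0_before = W0.copy()


def lora_delta(rank, scaling):
    """Return a random low-rank update scaling * B @ A (stand-in for a trained adapter)."""
    B = rng.normal(size=(d_out, rank))
    A = rng.normal(size=(rank, d_in))
    return scaling * (B @ A)


# Two hypothetical adapters with different ranks and scalings.
delta_1 = lora_delta(rank=2, scaling=0.5)
delta_2 = lora_delta(rank=4, scaling=2.0)

# Sequential merge, done on a copy so the base weight is not modified.
W_seq = W0.copy()
W_seq += delta_1
W_seq += delta_2

# Direct sum: phi_0 + delta phi_1 + delta phi_2.
W_sum = W0 + delta_1 + delta_2

print("sequential merge equals direct sum:", np.allclose(W_seq, W_sum))
print("base weight unchanged:", np.array_equal(W0, W0_before))
```

Whether this carries over to a real model depends on how the adapters were trained and on details such as the classification head, which this sketch ignores.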

I couldn't find any information beyond using merge_and_unload().

EDIT

I found that there is a description of it... shame on me for not reading it.

merge_and_unload()

This method merges the LoRa layers into the base model. This is needed if someone wants to use the base model as a standalone model.

So basically, using merge_and_unload() is definitely not what I want.

Are there any other options for merging LoRA weights?

Any advice would be appreciated.

1 Answer

Perhaps add_weighted_adapter is what you are looking for. You can refer to this documentation and this issue.

    model.add_weighted_adapter(
        adapters=["lora-1", "lora-2"],
        weights=[0.5, 0.5],
        adapter_name="combined",
        combination_type="svd",
    )
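Note that add_weighted_adapter expects both adapters to already be loaded into the same PeftModel under the names passed in adapters. Below is a minimal sketch of the surrounding calls, assuming the two local directories from the question and a reasonably recent PEFT version. The function name combine_adapters and the reuse of the directory names as adapter names are illustrative choices, and the imports sit inside the function only so that the snippet can be defined without loading anything.

```python
def combine_adapters(base_name="roberta-base", adapter_dirs=("lora-1", "lora-2")):
    """Load several LoRA adapters onto one base model and add a weighted combination.

    Assumption: each entry in adapter_dirs is a plain directory name such as
    "lora-1", which is reused here as the adapter name.
    """
    from peft import PeftModel
    from transformers import RobertaForSequenceClassification

    base = RobertaForSequenceClassification.from_pretrained(base_name)

    # Load the first adapter under an explicit name instead of "default".
    model = PeftModel.from_pretrained(
        base, adapter_dirs[0], adapter_name=adapter_dirs[0]
    )

    # Attach the remaining adapters to the same PeftModel.
    for path in adapter_dirs[1:]:
        model.load_adapter(path, adapter_name=path)

    # Create a new adapter from the loaded ones (same call as above).
    model.add_weighted_adapter(
        adapters=list(adapter_dirs),
        weights=[0.5] * len(adapter_dirs),
        adapter_name="combined",
        combination_type="svd",
    )

    # Make the combined adapter the active one; nothing is merged into the base.
    model.set_adapter("combined")
    return model
```

With weights of 0.5 each, this is closer to an average of the two updates than to the plain sum in the question; weights of 1.0 each would be the nearer match. As far as I know, the svd combination type produces a low-rank approximation of the combined update, so the result may differ slightly from an exact sum. It is worth checking which combination types your PEFT version supports.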