How can Newton's method be used to solve for eigenvectors and eigenvalues of a given matrix?

Emily Wong

Emily Wong

I am a little confused about this question. It says one way we can compute the eigenvalue and eigenvector of a matrix is by solving for a system of non-linear equations given by:

(A - $\lambda I$)$x$ = $0$ , $x^{T} x$ = $1$

If $x_{0}$, and $\lambda_{0}$ are the initial values, then the next iteration is determined by solving for $\delta x$ and $\delta \lambda$ in the following systems(Part 1):

$A\delta x - \delta \lambda x_{0} = -(A-\lambda_{0}I)x_{0}$

-$x_{0}^{T} \delta x = \frac{1}{2}(x_{0}^{T}x_{0}-1)$

and therefore(Part 2);$x_{1} = x_{0} + \delta x $ and $\lambda_{1} = \lambda_{0} + \delta \lambda$

I am really confused about this question for a number of reasons:

- How do we apply Newton's method to matrices?

- How do we even get from part 1 to part 2?

- How is it even possible to solve for the eigenvalue and eigenvector in the equations showed above?

1 Answer

$\begingroup$Your point 1 : i.e., the way to build the general iteration step.

Let $A$ be an $n \times n$ matrix.

Here, the Newton's method will provide at the same time an eigenvalue and an associated (unit norm) eigenvector, i.e., will solve the pair of equations :

$$\begin{cases}f(x,\lambda)&:=&(A-\lambda I)x&=&0\\g(x,\lambda)&:=&x^Tx-1&=&0\end{cases}$$

(in fact $g$ is not dependent upon $\lambda$)

Let $y=\binom{x}{\lambda} \in \mathbb{R^{n+1}}$.

Therefore, the heart of the Newton scheme will be written in this way :

$$y_{k+1}=y_k-J^{-1}\binom{f(x_k,\lambda_k)}{g(x_k,\lambda_k)} \ \ \text{where} \ \ J=\begin{pmatrix}A-\lambda I&-x\\2x^T& 0\end{pmatrix}, \ \ \text{with arbitrary} \ \ y_1. \tag{1}$$

(one recognizes in $J$ the jacobian matrix of partial derivatives of $f$ and $g$ with respect to $x$ and $\lambda$ resp.)

Remarks :

a) Expression (1) is an extension to $n+1$ dimensions of the scalar case :

$$y_{k+1}=y_k-\dfrac{f(y_k)}{f'(y_k)}$$

b) It has been said that $y_1$ is arbitrary. This is not exact, as for all Newton methods. $y_1$ has to be in a convergence "basin" in order that the method converges. This convergence is towards one the eigenpairs $(x,\lambda)$ which, generally speaking, is "not to far" from the initial vector $y_1$.

c) The number of steps can be reduced in particular by stopping iterations when the norm of $(A-\lambda I)x$ is small enough.

d) This method can be twined with a so-called deflation method.

e) Here is a Matlab program implementing the method :

n=2;A=rand(n);I=eye(n); Y=rand(n+1,1); for k=1:10 % number of iteration steps x=Y(1:n);L=Y(n+1); J=[A-L*I,-x; 2*x',0]; V=[A*x-L*x; x'*x-1]; Y=Y-inv(J)*V; end; x=Y(1:n),% approx. of eigenvector L=Y(n+1),% approx. of eigenvalue [P,D]=eig(A), % comparison with "true" eigenelements

Your point 2: The iteration step in your method

Your increments $\delta x$ and $\delta \lambda$ can be gathered into $\delta y=\binom{\delta x}{\delta \lambda}$. It means, by considering relationship (1), that

$$\delta y:=\binom{\delta x}{\delta \lambda}=-J^{-1}\binom{f(x_n,\lambda_n)}{g(x_n,\lambda_n)}$$

otherwise said, that by multiplication by $J^{-1}$, that

$$J \binom{\delta x}{\delta \lambda}=-\binom{f(x_n,\lambda_n)}{g(x_n,\lambda_n)}$$

Expanding this matrix-vector relationship, one obtains :

$$\begin{cases}\color{red}{(A-\lambda I)} \delta x & - & x \delta \lambda&=&-(A-\lambda I)x\\ \ \ \ \ \ 2x \delta x &&&=&-x^tx+1\end{cases}$$

which is almost exactly the system you have, but for a discrepancy in the factor featured in red...

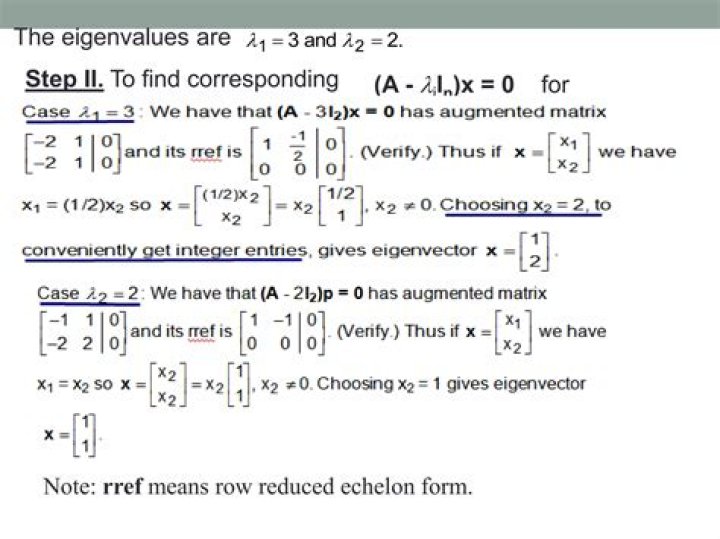

Fig. 1: The case of matrix $A=\begin{pmatrix}0&1;-2&3\end{pmatrix}$ with $\lambda_1=1$ and $\lambda_2=2$ with associated eigenvectors $\pm\binom{1/\sqrt{2}}{1/\sqrt{2}}$ and $\pm\binom{1/\sqrt{5}}{2/\sqrt{5}}$. The $\pm$ sign is important because it generates $4$ solutions instead of $2$.

$\endgroup$ 7