error: overloaded method value createDataFrame

Matthew Harrington

I tried to create an Apache Spark DataFrame:

val valuesCol = Seq(("Male","2019-09-06"),("Female","2019-09-06"),("Male","2019-09-07"))

valuesCol: Seq[(String, String)] = List((Male,2019-09-06), (Female,2019-09-06), (Male,2019-09-07))

Schema:

val someSchema = List(StructField("sex", StringType, true),StructField("date", DateType, true))

someSchema: List[org.apache.spark.sql.types.StructField] = List(StructField(sex,StringType,true), StructField(date,DateType,true))

It does not work:

val someDF = spark.createDataFrame(spark.sparkContext.parallelize(valuesCol),StructType(someSchema))

I got this error:

<console>:30: error: overloaded method value createDataFrame with alternatives:
  (data: java.util.List[_],beanClass: Class[_])org.apache.spark.sql.DataFrame <and>
  (rdd: org.apache.spark.api.java.JavaRDD[_],beanClass: Class[_])org.apache.spark.sql.DataFrame <and>
  (rdd: org.apache.spark.rdd.RDD[_],beanClass: Class[_])org.apache.spark.sql.DataFrame <and>
  (rows: java.util.List[org.apache.spark.sql.Row],schema: org.apache.spark.sql.types.StructType)org.apache.spark.sql.DataFrame <and>
  (rowRDD: org.apache.spark.api.java.JavaRDD[org.apache.spark.sql.Row],schema: org.apache.spark.sql.types.StructType)org.apache.spark.sql.DataFrame <and>
  (rowRDD: org.apache.spark.rdd.RDD[org.apache.spark.sql.Row],schema: org.apache.spark.sql.types.StructType)org.apache.spark.sql.DataFrame
 cannot be applied to (org.apache.spark.rdd.RDD[(String, String)], org.apache.spark.sql.types.StructType)
       val someDF = spark.createDataFrame(spark.sparkContext.parallelize(valuesCol),StructType(someSchema))

Should I change the date formatting in valuesCol? What actually causes this error?

2 Answers

With import spark.implicits._ you can convert a Seq into a DataFrame directly:

val df: DataFrame = Seq(("Male","2019-09-06"),("Female","2019-09-06"),("Male","2019-09-07"))
  .toDF() // <--- Here

Explicitly setting column names:

val df: DataFrame = Seq(("Male","2019-09-06"),("Female","2019-09-06"),("Male","2019-09-07"))
  .toDF("sex", "date")

For the desired schema, you can either cast the column or use a different type:

//Cast

Seq(("Male","2019-09-06"),("Female","2019-09-06"),("Male","2019-09-07"))
  .toDF("sex", "date")
  .select($"sex", $"date".cast(DateType))
  .printSchema()

//Types

val format = new java.text.SimpleDateFormat("yyyy-MM-dd")

Seq(
  ("Male", new java.sql.Date(format.parse("2019-09-06").getTime)),
  ("Female", new java.sql.Date(format.parse("2019-09-06").getTime)),
  ("Male", new java.sql.Date(format.parse("2019-09-07").getTime)))
  .toDF("sex", "date")
  .printSchema()

//Output

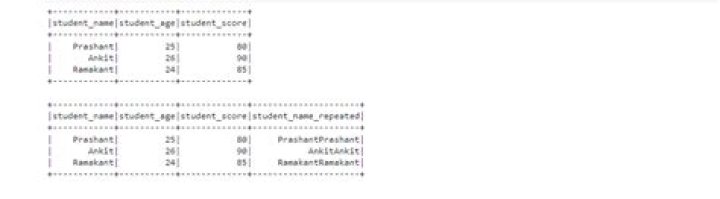

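root
 |-- sex: string (nullable = true)
 |-- date: date (nullable = true)

Regarding your question: as the error message shows, the createDataFrame overloads that take a StructType expect rows of type Row, not an RDD[(String, String)]. Since the element type of your RDD is already known, you can omit the schema and Spark will infer it from that type: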

val rdd: RDD[(String, String)] = spark.sparkContext.parallelize(valuesCol)

spark.createDataFrame(rdd)

root
 |-- _1: string (nullable = true)
 |-- _2: string (nullable = true)

Alternatively, you can define valuesCol as a Seq of Row instead of a Seq of tuples:

val valuesCol = Seq(
  Row("Male", "2019-09-06"),
  Row("Female", "2019-09-06"),
  Row("Male", "2019-09-07"))
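
To tie the Row-based approach back to the original schema, here is a minimal sketch of a complete version. One caveat: a Row holding the string "2019-09-06" does not satisfy a DateType field, and Spark should reject it at runtime once the data is evaluated, so this sketch stores java.sql.Date values instead. The object name CreateDataFrameExample, the method name build, and the local master setting are assumptions made for illustration; in spark-shell the spark session already exists.

```scala
import java.sql.Date

import org.apache.spark.sql.{DataFrame, Row, SparkSession}
import org.apache.spark.sql.types.{DateType, StringType, StructField, StructType}

// Hypothetical wrapper object, used only to make the sketch compile on its own.
object CreateDataFrameExample {

  def build(spark: SparkSession): DataFrame = {
    // Row values must match the schema: DateType expects java.sql.Date, not String.
    val rows = Seq(
      Row("Male", Date.valueOf("2019-09-06")),
      Row("Female", Date.valueOf("2019-09-06")),
      Row("Male", Date.valueOf("2019-09-07"))
    )

    val schema = StructType(List(
      StructField("sex", StringType, nullable = true),
      StructField("date", DateType, nullable = true)
    ))

    // RDD[Row] plus StructType matches the createDataFrame(rowRDD, schema) overload.
    spark.createDataFrame(spark.sparkContext.parallelize(rows), schema)
  }

  def main(args: Array[String]): Unit = {
    // Local session for illustration only (assumed setup).
    val spark = SparkSession.builder()
      .master("local[*]")
      .appName("createDataFrame-example")
      .getOrCreate()

    build(spark).printSchema()
    spark.stop()
  }
}
```

If the dates have to stay as strings in the input, keeping StringType in the schema and casting the column afterwards, as in the cast example above, is the other option.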