Derivative to Zero, What does it intuitively mean?

Emily Wong

I'm currently learning machine learning, and I came across this equation called Least Squares Regression.

X and w are both matrices. Their product is $\hat{y}$ (y hat), the prediction, which ideally should equal y.

We want to minimize the squared error given by this equation by changing w.

w can be found by differentiating the function with respect to w and setting the derivative to zero.

The question is, what does it intuitively mean?

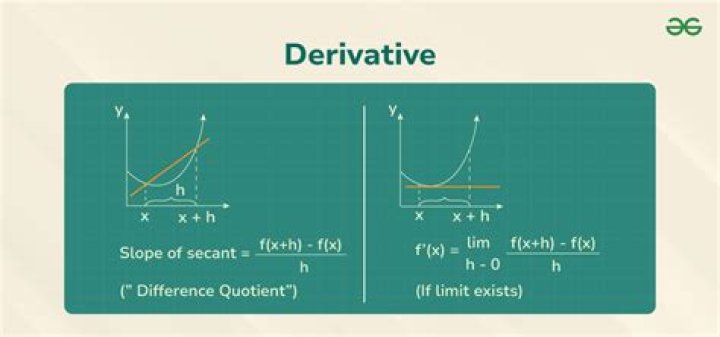

I know that a derivative gives the rate of change. But what does it mean, intuitively, for the rate of change to be 0?

2 Answers

The least squares minimizers form a convex set; therefore the extremum is a minimum.

To show the set of least squares minimizers is convex, consider the linear system $Ax = b$ where the system matrix $A\in\mathbb{C}^{m\times n}$, the data vector $b\in\mathbb{C}^{m}$, and the solution vector $x\in\mathbb{C}^{n}$. The least squares solution $x_{LS}$ is defined as $$ x_{LS} = \left\{x\in\mathbb{C}^{n} \colon \lVert Ax - b \rVert_{2}^{2} \text{ is minimized} \right\}. $$

Take a vector from the null space, $\eta\in\mathcal{N}(A)$. Because $A\eta = 0$, we have $A(x+\eta) = Ax$, so $x_{LS} + \eta$ is a minimizer whenever $x_{LS}$ is. If every convex combination of minimizers is also a minimizer, then the minimizers form a convex set.

Given $0 \le \lambda \le 1$, $$ \begin{align} \lVert A(\lambda x_{LS}) + A(1 - \lambda)(x_{LS} + \eta) - b\rVert_{2}^{2} &= \lVert \lambda Ax_{LS} + A x_{LS} + \underbrace{A \eta}_{0} - \lambda A x_{LS} - \underbrace{\lambda A \eta}_{0} - b \rVert_{2}^{2} \\ &= \lVert Ax_{LS} - b \rVert_{2}^{2} \end{align} $$ Because the convex combination of minimizers is within the set of minimizers, the set is convex.
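As a quick numerical check of the argument above, here is a minimal sketch on a toy system. The matrix `A`, the data `b`, and all helper names are assumptions made up for illustration; the data are real, so the gradient of $\lVert Ax - b \rVert_{2}^{2}$ is taken to be $2A^{T}(Ax - b)$. Both columns of `A` are identical, so `eta = (1, -1)` is in the null space.

```python
# Toy rank-deficient system (illustrative values, not from the question):
# both columns of A are identical, so eta = (1, -1) satisfies A @ eta = 0.
A = [[1.0, 1.0],
     [1.0, 1.0],
     [1.0, 1.0]]
b = [1.0, 2.0, 3.0]


def matvec(M, v):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def residual(x):
    """Return Ax - b."""
    return [ax_i - b_i for ax_i, b_i in zip(matvec(A, x), b)]


def squared_error(x):
    """Return ||Ax - b||^2."""
    return sum(r * r for r in residual(x))


def gradient(x):
    """Gradient of ||Ax - b||^2 for real data: 2 A^T (Ax - b)."""
    r = residual(x)
    return [2.0 * sum(A[i][j] * r[i] for i in range(len(A)))
            for j in range(len(x))]


x_ls = [1.0, 1.0]   # one minimizer: x1 + x2 equals the mean of b
eta = [1.0, -1.0]   # null space vector of A


def convex_combination(lam):
    """Return lam * x_ls + (1 - lam) * (x_ls + eta)."""
    shifted = [x + e for x, e in zip(x_ls, eta)]
    return [lam * x + (1.0 - lam) * s for x, s in zip(x_ls, shifted)]


for lam in (0.0, 0.25, 0.5, 0.75, 1.0):
    x = convex_combination(lam)
    print(lam, x, squared_error(x), gradient(x))
```

Every point on the segment gives the same squared error and a zero gradient, which is the "derivative equals zero" condition from the question holding along the whole set of minimizers.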

The derivative of a function $f(x)$ being zero at a point $p$ means that $p$ is a stationary point. That is, the function is not "moving" there (the rate of change is $0$). There are a few things that could happen.

The function has either a local maximum, a local minimum, or a saddle point there. To determine which one, you need to find out what happens around the point. For example, $f(x)=x^2$ has a minimum at $x=0$, $f(x)=-x^2$ has a maximum at $x=0$, and $f(x)=x^3$ has neither. You can see this by looking at the sign of the derivative to the left and right of the point. If there is a sign change, it's an extremum. If there's no sign change, it's a saddle point (for a function of one variable, this is usually called a stationary inflection point). I'll leave it to you to figure out which sign change means maximum or minimum.
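The sign test can be sketched in a few lines of code. This is only an illustration: the function name `classify_stationary_point` and the step size `h` are assumptions, the derivatives are typed in by hand, and the check samples just one point on each side, so it is a heuristic rather than a proof. Note that it also works out the sign-change exercise.

```python
def classify_stationary_point(df, p, h=1e-3):
    """Classify a stationary point p from the sign of the derivative df
    just to the left and just to the right of p."""
    left = df(p - h)
    right = df(p + h)
    if left < 0 < right:
        return "minimum"         # decreasing, then increasing
    if left > 0 > right:
        return "maximum"         # increasing, then decreasing
    return "no sign change"      # neither a minimum nor a maximum


# Derivatives of the three examples, written out by hand.
examples = {
    "x^2": lambda x: 2 * x,
    "-x^2": lambda x: -2 * x,
    "x^3": lambda x: 3 * x ** 2,
}

for name, df in examples.items():
    print(name, classify_stationary_point(df, 0.0))
```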
