$-1 = 0$ by integration by parts of $\tan(x)$

Mia Lopez

I had a calculus final yesterday, and in a question we had to find a primitive of $\tan(x)$ in order to solve a differential equation.

A friend of mine forgot that such a primitive could easily be found, tried to integrate $\tan(x)$ by parts... and then arrived at the result $0 = -1$. The kind of thing you're pretty satisfied to "prove", except during an important exam. :-°

So afterwards I tried to do the same:

$$\begin{align*} \int \tan(x)dx &= \int \sin(x) \times \frac{1}{\cos(x)}dx \\[0.1in] &= -\frac{\cos(x)}{\cos(x)} - \int - \frac{\cos(x) \times \sin(x)}{\cos(x)^2}dx \\[0.1in] &= -1 + \int \tan(x)dx \end{align*}$$

And therefore we get:

$$ \int \tan(x)dx = -1 + \int \tan(x)dx \implies 0 = -1$$

What? The reasoning sounds about right to me. Could someone explain where something went wrong?

Thanks, Christophe.

$\endgroup$ 13 Answers

$\begingroup$Without even reading I answer: the antiderivatives of a function are equal only up to an additive constant; that is, any two antiderivatives always differ by a constant on an interval.

Edit: OK, having now read the question, I confirm my suspicion. Note that the symbol $\int f(x)dx$ is not a well-defined function. You should interpret the symbol $\int f(x)dx$ as an undetermined differentiable function which, once differentiated, yields $f(x)$. More formally, I believe it's more common to define $\int f(x)dx$ as the set of functions described above; that is, for some nondegenerate interval $I$, $$\int f(x)dx=\{F\in \Bbb R^I: \text{$F$ is differentiable and }(\forall x\in I)(F'(x)=f(x))\}.$$

Using this definition, one has to be very careful about what one means by $\int f(x)dx=g(x)$, because it doesn't mean what one would initially suspect.
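To make this concrete for the question's computation (a sketch; the names $F$, $G$ and $C$ are introduced here only for illustration): the two occurrences of $\int \tan(x)dx$ need not denote the same antiderivative. If the left-hand one is $F$ and the right-hand one is $G$, the integration by parts only says

$$F(x) = -1 + G(x), \qquad F'(x) = G'(x) = \tan(x),$$

which is perfectly consistent, since $F - G = -1$ is a constant. Subtracting "$\int \tan(x)dx$" from both sides silently assumes $F = G$, and that is where $0 = -1$ comes from.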

$\endgroup$ $\begingroup$Let's try using "real" primitives. Suppose $-\pi/2<x<\pi/2$; then

\begin{align}
\int_0^x \tan t\,dt
&=\left[(-\cos t)\frac{1}{\cos t}\right]_0^x
-\int_0^x (-\cos t)\frac{\sin t}{\cos^2 t}\,dt\\
&=\bigl[-1\bigr]_0^x+\int_0^x\tan t\,dt\\
&=(-1)-(-1)+\int_0^x\tan t\,dt\\
&=\int_0^x\tan t\,dt
\end{align}

which of course doesn't say much, does it? ;-)

Fixing the lower limit of integration is choosing a well determined primitive (or antiderivative). Always do it in case of doubt.
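If you want to see numerically that fixing the lower limit pins down one specific primitive, here is a minimal sketch in Python. The helper names `simpson` and `tan_primitive` are made up for this illustration, and the closed form $-\ln(\cos x)$ is the standard primitive of $\tan$ that vanishes at $0$ on $-\pi/2<x<\pi/2$.

```python
import math

def simpson(f, a, b, n=1000):
    """Approximate the integral of f over [a, b] with composite Simpson's rule."""
    if n % 2:
        n += 1  # Simpson's rule needs an even number of subintervals
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        # interior points alternate between weight 4 (odd i) and 2 (even i)
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3

def tan_primitive(x):
    """Primitive of tan that vanishes at 0, valid for -pi/2 < x < pi/2."""
    return -math.log(math.cos(x))

x = 1.0
numeric = simpson(math.tan, 0.0, x)   # integral of tan t from 0 to x
closed_form = tan_primitive(x)        # -ln(cos x)
print(numeric, closed_form)
```

The two printed values should agree to many decimal places, with no stray $-1$ anywhere.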

$\endgroup$ $\begingroup$I would like to say that you were able to prove that $-1 = 0$, but unfortunately, you used some (or really a lot of) bad calculus. :(

While integrating the expression

$$\int\tan(x)~dx$$

you must separate it into two parts, like you did to get $\int \frac{\sin(x)}{\cos(x)}dx$,

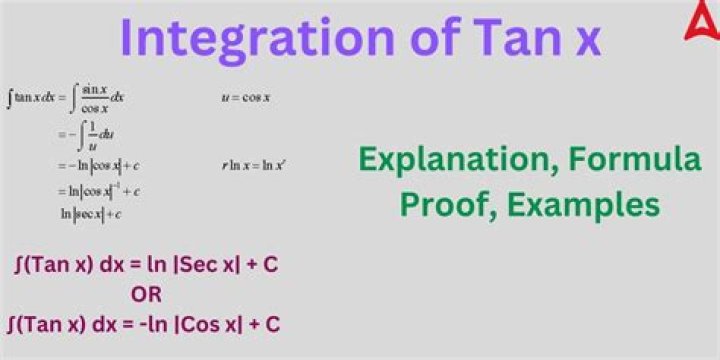

Then, you must find a $u$ and do $u$ substitution. $u = \cos(x)$, and $du = -\sin(x) dx$

then replace the $u$ and $du$ to get $-\int \frac{du}{u}$, which integrates to $-\ln|u| + C$ where $u = \cos(x)$, so the answer is $-\ln|\cos(x)| + C$, which can equally be written as $\ln|1/\cos(x)| + C$ or $\ln|\sec(x)| + C$.
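One way to check any candidate primitive is to differentiate it. On an interval where $\cos(x) \neq 0$, the chain rule gives

$$\frac{d}{dx}\bigl(-\ln|\cos(x)|\bigr) = -\frac{-\sin(x)}{\cos(x)} = \tan(x),$$

so $-\ln|\cos(x)| + C$ is indeed a family of primitives of $\tan(x)$, for any constant $C$.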

In addition, it is bad calculus to put the bounds into the equation before actually integrating, so the entire $\int \tan(x)dx =$ stuff is completely incorrect.

$\endgroup$